There’s been a quiet but profound shift inside Amazon Connect — and many businesses haven’t noticed it yet.

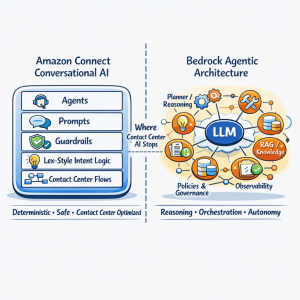

Between AWS re:Invent 2025 and the January 2026 rollout, Conversational AI, AI Agents, Prompts, and Guardrails were introduced into Amazon Connect, fundamentally changing how contact centers can be designed and operated.

This isn’t just a feature update. It’s a platform shift.

From Amazon Q to Conversational AI Agents

Many organizations experimented with earlier AI features such as Amazon Q in Connect or Bedrock-powered Q&A integrations with Lex bots. These were powerful, but they often required deep engineering effort, custom orchestration, and careful prompt management.

The new Conversational AI experience inside Amazon Connect changes that dramatically.

- AI Agents are now first-class citizens in the Connect admin interface

- Prompt engineering is structured and managed, not hidden in code

- Guardrails are built-in, not bolted on

- Knowledge Bases integrate seamlessly for real-time answers

In short: what used to require a team of AI engineers can now be configured directly within the contact center.

What Actually Changed?

If you haven’t logged into your Connect instance recently, you may have missed it — the admin portal itself has evolved.

You’ll now see:

- Dedicated AI Agent configuration

- Structured Prompt design (Identity, Behavior, Procedures, Restrictions, Escalation Rules)

- Tool-based orchestration (Retrieve, Escalate, Complete)

- Integrated Knowledge Bases powered by modern retrieval

This is not just UI polish — it represents a shift toward agentic AI inside the contact center.
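To make the idea of tool-based orchestration concrete, here is a minimal Python sketch of an agent choosing between the three tools named above. It is an illustration only, not the Amazon Connect API: the `ToolResult` type, the `run_tool` dispatcher, and the in-memory `KNOWLEDGE_BASE` with its sample entry are all hypothetical names and data invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Hypothetical stand-in for a knowledge base; the entry is sample data only.
KNOWLEDGE_BASE: Dict[str, str] = {
    "return policy": "Items can be returned within 30 days.",
}


@dataclass
class ToolResult:
    tool: str      # name of the tool that ran
    message: str   # text passed back into the conversation
    done: bool     # True when the AI agent's part of the contact is over


def retrieve(query: str) -> ToolResult:
    """Look up an answer; the conversation continues afterwards."""
    for topic, answer in KNOWLEDGE_BASE.items():
        if topic in query.lower():
            return ToolResult("Retrieve", answer, done=False)
    return ToolResult("Retrieve", "", done=False)


def escalate(reason: str) -> ToolResult:
    """Hand the contact to a human agent."""
    return ToolResult("Escalate", f"Transferring to a human agent ({reason}).", done=True)


def complete(summary: str) -> ToolResult:
    """Close the interaction with a summary."""
    return ToolResult("Complete", summary, done=True)


# Only tools registered here can be called by the agent.
TOOLS: Dict[str, Callable[[str], ToolResult]] = {
    "Retrieve": retrieve,
    "Escalate": escalate,
    "Complete": complete,
}


def run_tool(name: str, argument: str) -> ToolResult:
    """Dispatch a tool call by name, rejecting anything not registered."""
    if name not in TOOLS:
        raise ValueError(f"Unknown tool: {name}")
    return TOOLS[name](argument)
```

The design point is the closed registry: the agent can only do what appears in `TOOLS`, which is what makes its behavior easier to reason about than free-form generation.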

Why This Matters for Business

This release bridges a long-standing gap:

Before:

- AI was experimental

- It required custom Bedrock and Lambda code plus orchestration

- It was difficult to operationalize at scale

Now:

- AI is operational inside the contact center

- Configurable by architects, not just ML engineers

- Integrated directly into customer journeys

This enables a new class of capability:

- Answer complex customer questions instantly

- Guide conversations with structured AI behavior

- Escalate intelligently when needed

- Reduce handle time while improving CX

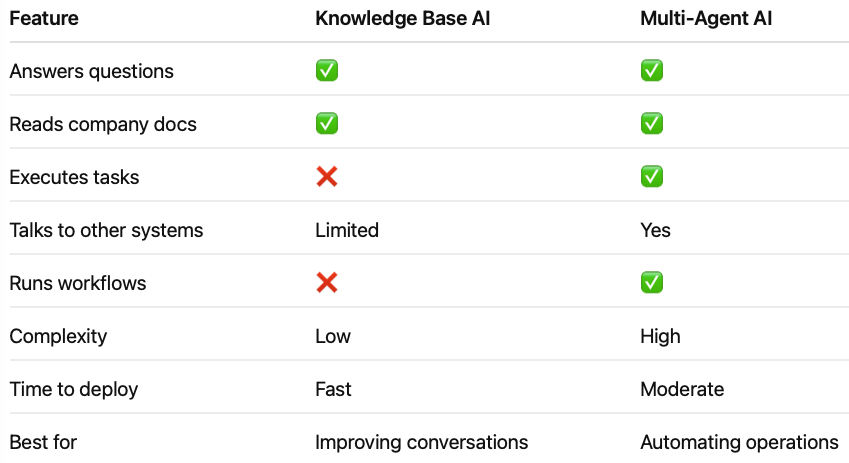

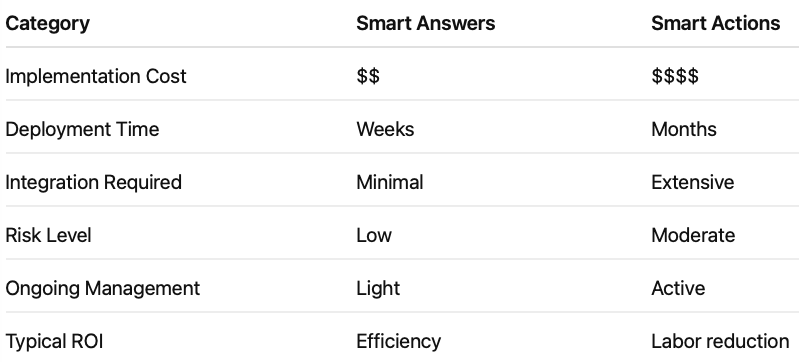

AI for Answers vs AI for Action

The distinction between answering a question and taking an action is where this becomes critical.

AI for Answers (Knowledge Base driven):

- FAQ handling

- Policy explanations

- Product information

AI for Action (Agent + Tools):

- Order status lookups

- Appointment scheduling

- Account updates

The new Amazon Connect AI Agents allow you to move beyond simple answers and into guided, outcome-driven interactions.
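One way to picture the split is as a routing decision made once the customer's intent is known. The sketch below is a simplified assumption about how such routing could look in Python; the intent labels, the `Path` enum, and `choose_path` are hypothetical and do not correspond to any Amazon Connect setting.

```python
from enum import Enum


class Path(Enum):
    ANSWER = "answer"  # served from a knowledge base
    ACTION = "action"  # served by an agent calling tools


# Hypothetical intent labels mirroring the two lists above.
ANSWER_INTENTS = {"faq", "policy_explanation", "product_information"}
ACTION_INTENTS = {"order_status", "appointment_scheduling", "account_update"}


def choose_path(intent: str) -> Path:
    """Pick the handling path for a recognized intent."""
    if intent in ACTION_INTENTS:
        return Path.ACTION
    if intent in ANSWER_INTENTS:
        return Path.ANSWER
    # Anything unrecognized is surfaced rather than guessed at.
    raise ValueError(f"Unrecognized intent: {intent}")
```

For example, `choose_path("order_status")` returns `Path.ACTION`, while `choose_path("faq")` returns `Path.ANSWER`.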

The Real Breakthrough: Structured Prompts + Guardrails

One of the biggest challenges in generative AI has been consistency and control.

This release introduces a structured approach to prompts:

- Identity – Who the agent is

- Behavior – How it communicates

- Procedures – What it must do

- Restrictions – What it must never do

- Escalation Rules – When to involve a human

Combined with guardrails, this makes AI predictable, safe, and business-ready.
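As a rough illustration, the five sections above can be modeled as a small data structure that renders into a single prompt. The section names come from the list above; everything else in this Python sketch is an assumption, including the `StructuredPrompt` class, the rendered text layout, and the `violates_guardrail` check. In a managed service, guardrails are typically configured rather than hand-coded, so the check here only stands in for the concept.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class StructuredPrompt:
    identity: str                 # who the agent is
    behavior: str                 # how it communicates
    procedures: List[str]         # what it must do
    restrictions: List[str]       # what it must never do
    escalation_rules: List[str]   # when to involve a human

    def render(self) -> str:
        """Render the sections, in a fixed order, as one prompt string."""
        def bullets(items: List[str]) -> str:
            return "\n".join(f"- {item}" for item in items)

        return "\n\n".join([
            f"Identity:\n{self.identity}",
            f"Behavior:\n{self.behavior}",
            f"Procedures:\n{bullets(self.procedures)}",
            f"Restrictions:\n{bullets(self.restrictions)}",
            f"Escalation Rules:\n{bullets(self.escalation_rules)}",
        ])


def violates_guardrail(reply: str, blocked_terms: List[str]) -> bool:
    """Toy guardrail: flag a reply containing any blocked term (case-insensitive)."""
    lowered = reply.lower()
    return any(term.lower() in lowered for term in blocked_terms)


# Hypothetical example values.
prompt = StructuredPrompt(
    identity="You are a support assistant for an online store.",
    behavior="Be concise and polite.",
    procedures=["Verify the order number before sharing order details."],
    restrictions=["Never give legal advice."],
    escalation_rules=["Transfer to a human if the customer asks for one."],
)
```

Keeping each section as a separate field means a restriction or escalation rule can be reviewed and changed on its own, instead of being buried in one long block of prompt text.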

What This Means for Your Contact Center

Organizations that adopt this early stand to gain clear advantages:

- Handle more customers without adding staff

- Improve first-call resolution

- Empower agents with better information

- Reduce operational costs

More importantly, it changes the role of the contact center from a cost center to a customer experience engine.

Final Thought

This is one of the most significant updates to Amazon Connect since its launch.

And yet — many businesses don’t even know it’s there.

If you’re still thinking about AI as a chatbot or FAQ tool, you’re already behind.

The future is AI-driven interaction — not just AI-generated answers.

— DrVoIP

Where IT meets AI — in the cloud.